How do our brains decide who we like... and who we don’t?

When we face discrimination, our brains interpret the experience as stress. Discrimination—or even the anticipation of it—can put us at a heightened level of vigilance. Over time, this can cause chronic stress, contribute to physical symptoms like high blood pressure and clogged arteries, and even help generate long-term changes in brain structure that contribute to anxiety, depression, and addiction.

This is a widespread concern. In an online survey by the American Psychological Association, 61 percent of respondents said they experience discrimination daily, and the numbers were higher for nonwhite populations.

But while the targets of discrimination experience understandable stress, what’s going on in the brains of those doing the discriminating? Prejudice and bias do not help us survive in today’s world. But scientists believe the underlying behavior, the tendency to categorize the world around us into groups, does have an evolutionary benefit. Our ancestors survived because they learned to group spiders, snakes, and mushrooms into a category labeled “dangerous.”

The evolutionary benefits of grouping extended to people, too. By banding together with others, early humans protected themselves from attacking out-groups. Social neuroscientist David Amodio explained in a paper that our 21st-century brains continue to distinguish between “us” and “them,” contributing to conflict, inequality, and even war and genocide.

We are quick to exhibit favoritism toward those in our in-groups. Babies as young as six months are more likely to track the gaze of an adult of their own race. But that doesn’t mean racial bias is inborn. Instead, one of the researchers wrote in a press release that the findings “point to the possibility that racial bias may arise out of our lack of exposure to other-race individuals in infancy.”

As adults, we continue to show favoritism toward our own groups, even when those groups are arbitrary or meaningless, such as being assigned the same random letter in a study. We have all heard people take pride in their city, school, ancestry, or family. One study, which found that college students are more likely to wear university-branded clothing on the days following a football win, pointed out that people will go so far as to boast that their state has produced the most vice presidents. We may no longer need physical protection from attackers, but humans continue to seek out, and show preference for, our in-groups.

In a 2016 study, participants playing an online game were led to believe they witnessed one player steal from another. In some cases, participants believed they had something in common with the thief: either they supported the same football team or were from the same country. In other cases, they believed the thief was associated with a different football team or country than their own. When given the opportunity to punish the cheater by withdrawing some money, participants who made their decision quickly were harsher toward those with whom they did not share something in common.

But there is more to discrimination than an unconscious preference for members of our in-groups. As our brains categorize people into groups, we also tend to associate groups with certain socially reinforced characteristics or stereotypes, such as associating Asian cultures with strong work ethics or women with childcare. The instant, unconscious association of certain groups with certain stereotypes or characteristics is called implicit bias. Implicit bias may be at work when a hiring manager instinctively views a tall male candidate as a promising leader, or when a police officer mistakes a wallet for a gun in the hand of a black man.

Neuroscientists can actually observe implicit bias in action. When a person exhibits bias, MRI scans reveal activity in the amygdala, the “emotional center” of the brain that reacts to fear and threats.

Without an MRI machine, a well-known way to measure the strength of automatic associations is the Implicit Association Test (IAT), which can be taken online. When taking a version of the IAT focused on race, we are asked to quickly associate black faces with “positive” words and white faces with “negative” words. The test is then repeated, with the pairings switched. Most users complete the IAT more quickly when pairing white faces with positivity, and show more hesitation when told to sort black faces with positivity. This is often true even among subjects who don’t believe they hold any bias. For example, you might strongly believe that women are crucial to the workforce (and be a working woman yourself), yet still associate words like “executive” and “business” more quickly with men than with women.

The IAT has its critics, who point out that an individual’s score is likely to change significantly on a retest, and that the test does not seem to actually measure a participant’s likelihood of acting in a discriminatory manner. But proponents believe the test can be valuable for understanding our immediate, unconscious biases.


And understanding our own biases can be an important first step to overcoming them. In 2014, researchers tested 18 different methods for reducing bias on the IAT. In the most effective iteration of the study, participants read a second-person story describing a scene where they were violently assaulted by a white man and then rescued by a black man. After reading the story, participants showed 48 percent less implicit racial bias on the IAT. In another iteration of the study, participants showed a 40 percent decrease in implicit racial bias after playing dodgeball with black teammates, against a team of cheating white players.

And remember the experiment where participants believed they were witnessing another player cheat in an online game, and then proceeded to punish cheaters more harshly if they belonged to an out-group? Not everyone showed bias in that study. Participants who stopped and thought rationally before making a decision doled out punishments more equally.

You can work on decreasing your implicit biases by acknowledging the ones you do harbor, and by making an effort to expose yourself to people who don’t fit the stereotypes. Studies found decreased in-group bias and intergroup prejudice after participants empathized with a movie character of another race, thought about positive and well-known people of another race, or even just interacted with people of other races. Implicit bias is a significant problem with real-life consequences, but the good news is there are ways to work on reducing your bias, and they don’t all require a team of cheating dodgeball players.

Katie Rose Quandt

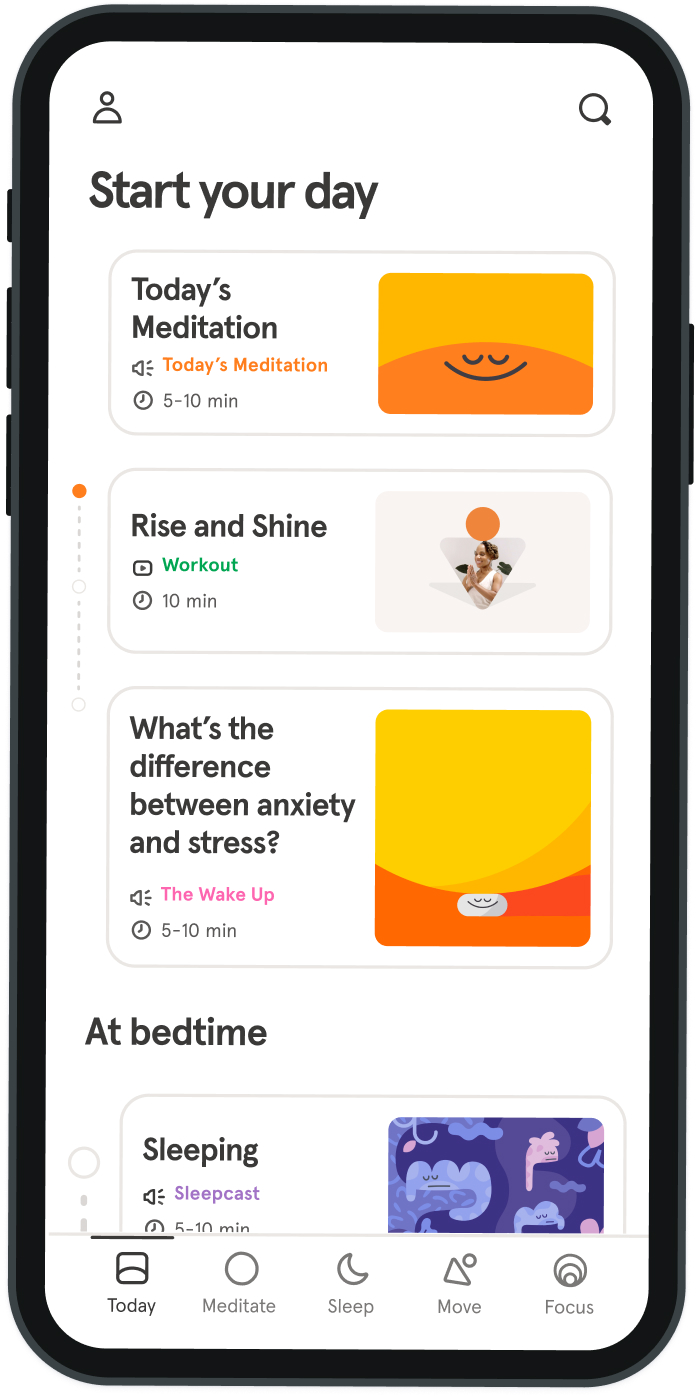

Be kind to your mind

- Access the full library of 500+ meditations on everything from stress, to resilience, to compassion

- Put your mind to bed with sleep sounds, music, and wind-down exercises

- Make mindfulness a part of your daily routine with tension-releasing workouts, relaxing yoga, Focus music playlists, and more

Meditation and mindfulness for any mind, any mood, any goal

Stay in the loop

Be the first to get updates on our latest content, special offers, and new features.

By signing up, you’re agreeing to receive marketing emails from Headspace. You can unsubscribe at any time. For more details, check out our Privacy Policy.

- © 2025 Headspace Inc.
